A global enterprise operating in a data-intensive environment faced increasing pressure to deliver accurate, consistent, and timely data for analytics and business decision-making. With high data volumes, complex transformations, and frequent pipeline executions, ETL testing had become a critical bottleneck in their delivery lifecycle.

Therefore, the objective was clear: modernize ETL testing and data quality validation to improve speed, reliability, and scalability.

About the Client

The client is a global enterprise whose analytics and business decision-making depend on accurate, consistent, and timely data. The engagement involved high data volumes, complex transformations, and frequent pipeline executions across multiple data sources and targets.

The Challenge

Initially, the client performed ETL testing entirely by hand, which created several problems for speed, reliability, and scalability:

- Manually validating data across multiple source and target tables consumed significant QA resources.

- Routine checks such as record counts, duplicate detection, and schema validation had to be repeated by hand in every test cycle.

- Validating large datasets by hand increased the risk of human error.

- Each test run took up to 10 days to complete, creating long execution cycles.

- As new tables and pipelines were added, the manual approach could not scale.

Although the QA team was experienced, manual testing could not keep pace with the growing data landscape, so a transformation was urgently needed.

The Solution

To address these challenges, SDET Tech introduced a scalable, automation-driven ETL testing framework. This solution leveraged modern data platforms and quality engineering best practices.

1. Intelligent ETL Test Automation

First, the team designed and implemented an ETL automation framework using Databricks. The framework automates foundational validations, including:

- Record count validation

- Metadata and schema checks

- Duplicate detection

- Source-to-target data reconciliation
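The four foundational checks above can be sketched in a few lines. This is a minimal illustration only. The actual framework runs on Databricks and presumably operates on Spark DataFrames, whereas the sketch below uses plain Python lists of dictionaries (one per row) so it runs anywhere. All function names are hypothetical.

```python
from collections import Counter

# Hypothetical helpers; rows are plain dicts standing in for Spark rows.


def check_record_count(source_rows, target_rows):
    """Record count validation: source and target must hold the same number of rows."""
    return len(source_rows) == len(target_rows)


def check_schema(rows, expected_columns):
    """Schema check: every row must carry exactly the expected column names."""
    expected = set(expected_columns)
    return all(set(row) == expected for row in rows)


def find_duplicates(rows, key_columns):
    """Duplicate detection: return key tuples that occur more than once."""
    counts = Counter(tuple(row[c] for c in key_columns) for row in rows)
    return [key for key, n in counts.items() if n > 1]


def reconcile(source_rows, target_rows):
    """Source-to-target reconciliation on full rows (multiset difference both ways)."""
    def as_counter(rows):
        # Sort each row's (column, value) pairs so column order does not matter.
        return Counter(tuple(sorted(row.items())) for row in rows)

    src, tgt = as_counter(source_rows), as_counter(target_rows)
    return {
        "missing_in_target": list((src - tgt).elements()),
        "unexpected_in_target": list((tgt - src).elements()),
    }


source = [{"id": 1, "amount": 10}, {"id": 2, "amount": 20}]
target = [{"id": 1, "amount": 10}, {"id": 2, "amount": 25}]
print(check_record_count(source, target))  # True: both sides have 2 rows
print(reconcile(source, target))
```

The record counts match even though one amount differs. This shows why a count check alone is not enough and why reconciliation is listed as a separate validation.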

2. Advanced Data Quality Coverage

In addition, the framework expanded validations to include:

- Primary key validation

- Transformation and business rule validation

- Null value and data consistency checks
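The advanced checks follow the same pattern. Again, this is a hedged sketch in plain Python rather than the framework's actual code. The helper names and the example business rule (converting a hypothetical `amount_cents` column into `amount_usd`) are illustrative assumptions.

```python
def check_primary_key(rows, key_columns):
    """Primary key validation: return indexes of rows whose key is null or repeated."""
    seen, violations = set(), []
    for i, row in enumerate(rows):
        key = tuple(row.get(c) for c in key_columns)
        if any(v is None for v in key) or key in seen:
            violations.append(i)
        seen.add(key)
    return violations


def check_not_null(rows, columns):
    """Null check: map each column that contains nulls to its null count."""
    counts = {c: sum(1 for row in rows if row.get(c) is None) for c in columns}
    return {c: n for c, n in counts.items() if n > 0}


def check_transformation(source_rows, target_rows, key, rule):
    """Business rule validation: apply `rule` to each source row and compare the
    resulting columns with the target row that shares the same key."""
    target_by_key = {row[key]: row for row in target_rows}
    mismatches = []
    for src in source_rows:
        expected = rule(src)
        actual = target_by_key.get(src[key])
        if actual is None or any(actual.get(col) != val for col, val in expected.items()):
            mismatches.append(src[key])
    return mismatches


def to_usd(row):
    """Hypothetical rule: target.amount_usd must equal source.amount_cents / 100."""
    return {"amount_usd": row["amount_cents"] / 100}


source = [{"order_id": 1, "amount_cents": 1250}, {"order_id": 2, "amount_cents": 990}]
target = [{"order_id": 1, "amount_usd": 12.5}, {"order_id": 2, "amount_usd": 9.0}]
print(check_transformation(source, target, "order_id", to_usd))  # [2]
```

Comparing floats with `!=` is acceptable for this toy example. A production rule would more likely use a tolerance or decimal types.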

3. Framework Design & Structure

To ensure maintainability, reusability, and ease of adoption, SDET Tech designed the framework with a clean, modular, and configuration-driven structure. Here is the layout:

/etl_tests/
- config/
  - test_config.json: centralized configuration for paths and parameters
- test_cases/
  - test_count.py: row count validations
  - test_schema.py: metadata and schema validation
  - test_duplicates.py: duplicate data checks
  - test_data_validation.py: business rule and transformation validations
- utils/
  - run_tests.py: entry point to trigger ETL validations
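In a configuration-driven layout like this, the entry point typically reads table definitions and check names from the config file and dispatches each one to the matching validation. The sketch below assumes a JSON shape for `test_config.json` (the real file's keys are not documented here) and a caller-supplied `load_table` function. On Databricks that function might wrap `spark.table(name)`, but the sketch uses in-memory lists so it runs standalone.

```python
import json

# Assumed shape of config/test_config.json; the key names are hypothetical.
CONFIG_JSON = """
{
  "tables": [
    {
      "source": "raw.orders",
      "target": "curated.orders",
      "key_columns": ["order_id"],
      "checks": ["count", "duplicates"]
    }
  ]
}
"""


def run_count(src, tgt, table_cfg):
    """Row count check: source and target sizes must match."""
    return len(src) == len(tgt)


def run_duplicates(src, tgt, table_cfg):
    """Duplicate check on the target's configured key columns."""
    keys = [tuple(row[c] for c in table_cfg["key_columns"]) for row in tgt]
    return len(keys) == len(set(keys))


# Registry mapping config check names to functions; adding a validation means
# writing one function and registering it here.
CHECKS = {"count": run_count, "duplicates": run_duplicates}


def run_tests(config, load_table):
    """Run every configured check for every configured table."""
    results = []
    for table_cfg in config["tables"]:
        src = load_table(table_cfg["source"])
        tgt = load_table(table_cfg["target"])
        for name in table_cfg["checks"]:
            results.append((table_cfg["target"], name, CHECKS[name](src, tgt, table_cfg)))
    return results


tables = {
    "raw.orders": [{"order_id": 1}, {"order_id": 2}],
    "curated.orders": [{"order_id": 1}, {"order_id": 1}],
}
print(run_tests(json.loads(CONFIG_JSON), tables.__getitem__))
# [('curated.orders', 'count', True), ('curated.orders', 'duplicates', False)]
```

With this structure, pointing the same code at a new project only requires a different config file. That is the kind of reuse a configuration-driven structure is meant to enable.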

Consequently, this structure enabled:

- Quick onboarding for new teams

- Easy extensibility for adding new validations

- Reuse across multiple projects with minimal configuration changes

- Seamless integration with enterprise data pipelines

Implementation & Execution

As data volume increased, SDET Tech further optimized execution. For instance, the team implemented parallel execution (multithreading) to validate multiple tables simultaneously. Additionally, they executed tests using Databricks Workflows, where each batch ran as a separate pipeline with a dedicated cluster.
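Table-level parallelism with threads can be sketched using Python's standard `concurrent.futures` module. This is an assumption about how the multithreading might look, not the team's code, and `validate` stands in for whatever per-table suite the framework runs. Threads are a plausible fit here because, on Databricks, each validation mostly waits on Spark jobs running on the cluster rather than using the driver's CPU, and Spark can run jobs submitted concurrently from separate threads.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def validate_in_parallel(table_names, validate, max_workers=4):
    """Run `validate(table_name)` for several tables concurrently.

    Returns {table_name: result}; a failing table records its error message
    instead of aborting the whole batch.
    """
    results = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(validate, name): name for name in table_names}
        for future in as_completed(futures):
            name = futures[future]
            try:
                results[name] = future.result()
            except Exception as exc:  # keep going if one table's checks crash
                results[name] = f"error: {exc}"
    return results


def fake_validate(table_name):
    """Hypothetical stand-in for a per-table validation suite."""
    if table_name == "broken_table":
        raise ValueError("table not found")
    return "passed"


print(validate_in_parallel(["orders", "customers", "broken_table"], fake_validate))
```

Capturing per-table errors rather than letting one exception propagate means a single bad table does not hide the results for the rest of the batch.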

To keep costs in check, the team analyzed cluster configurations and right-sized node usage to match workload requirements. As a result, they reduced overall compute costs without compromising performance.

The framework also stored validation results in Delta tables with custom schemas. This gave the client transparent reporting, auditability, and easy analysis of data quality metrics, which in turn improved confidence in downstream reporting and analytics.
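One way to give stored results a custom schema is to use one typed record per check. The field names below are assumptions, not the client's actual schema. On Databricks, a list of such rows could be appended to a Delta table with `spark.createDataFrame(rows).write.format("delta").mode("append").saveAsTable(...)`. The sketch stops before that step so it runs without Spark.

```python
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ValidationResult:
    """One row in a hypothetical validation-results table."""
    run_id: str
    table_name: str
    check_name: str
    passed: bool
    expected: Optional[str] = None
    actual: Optional[str] = None
    checked_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def to_rows(results):
    """Convert results to plain dicts, e.g. as input to spark.createDataFrame."""
    return [asdict(r) for r in results]


def pass_rate_by_table(results):
    """Data quality metric: fraction of checks passed per table."""
    totals = {}
    for r in results:
        passed, total = totals.get(r.table_name, (0, 0))
        totals[r.table_name] = (passed + int(r.passed), total + 1)
    return {table: passed / total for table, (passed, total) in totals.items()}


results = [
    ValidationResult("run-001", "orders", "count", True, "1000", "1000"),
    ValidationResult("run-001", "orders", "duplicates", False, "0", "3"),
    ValidationResult("run-001", "customers", "schema", True),
]
print(pass_rate_by_table(results))  # {'orders': 0.5, 'customers': 1.0}
```

Storing `expected` and `actual` as strings is a simplification that keeps a single schema for every check type. A stored `run_id` and timestamp are what make the table useful for auditing over time.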

Results & Impact

The transformation delivered significant, measurable outcomes for the client. Specifically:

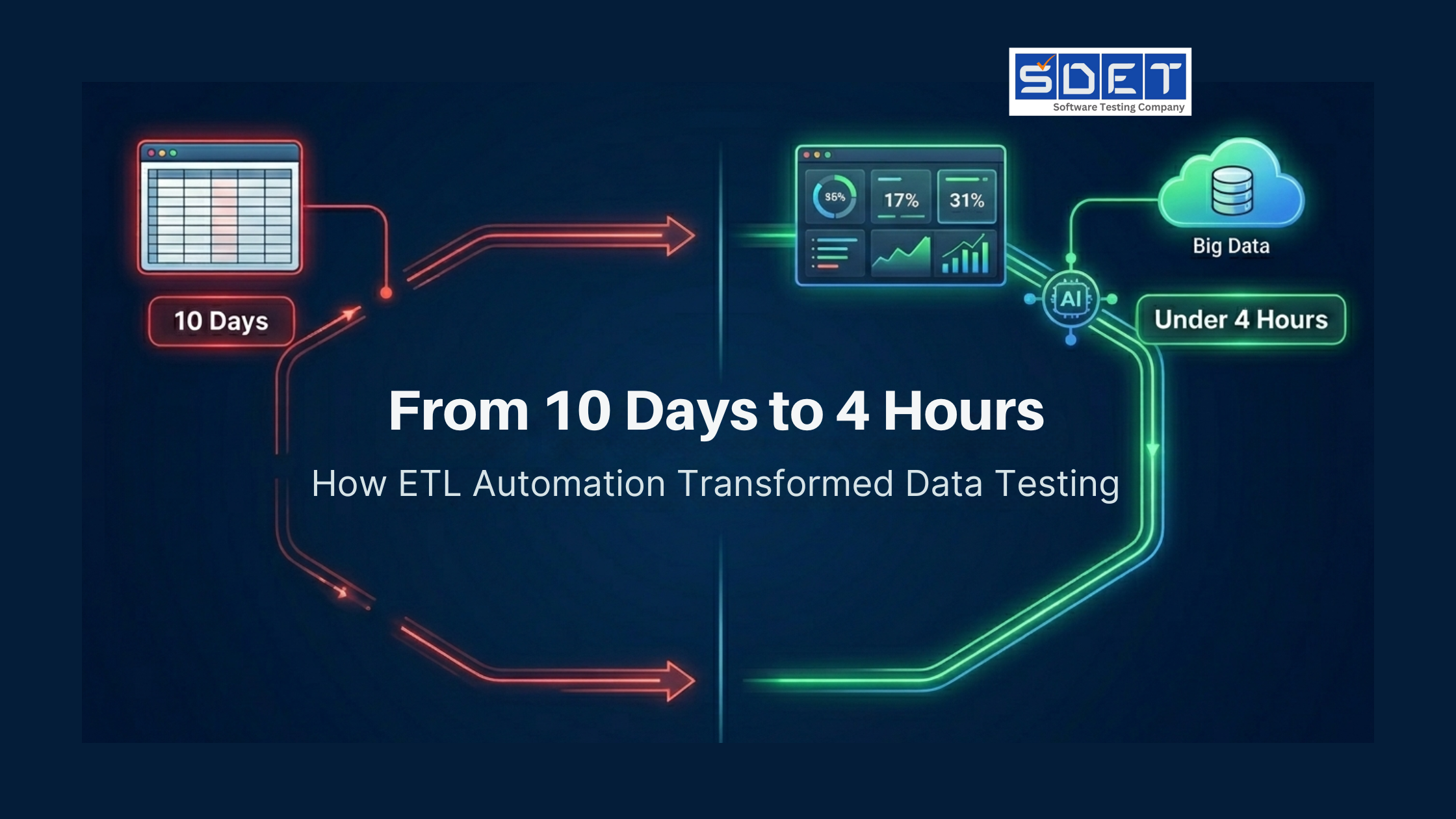

- Reduced ETL testing cycle time from 10 days to under 4 hours, a 60x acceleration.

- Achieved 3x reduction in manual QA effort, freeing up skilled engineers for higher-value work.

- Improved data accuracy and consistency across critical business pipelines.

- Accelerated release cycles and time-to-market.

- Delivered a reusable, enterprise-ready ETL testing framework that can be leveraged across projects.

- Reduced compute costs through optimized cluster configurations.

The final framework can be reused across projects by updating the source tables, target tables, and transformation logic, with no re-engineering required.

Key Takeaways / Why It Worked

This success story demonstrates a clear blueprint for modernizing data quality assurance. Here is why it worked:

- Automation First: Replacing manual validation with intelligent automation removed the testing bottleneck and reduced the risk of human error.

- Modular Framework Design: A clean, configuration-driven structure enables quick onboarding, easy extensibility, and reuse across multiple projects.

- Scalability by Design: Parallel execution and Databricks Workflows ensured the solution could handle growing data volumes without performance degradation.

- Cost Optimization: Right-sizing clusters and optimizing configurations delivered efficiency without compromising performance.

- Auditability & Transparency: Storing results in Delta tables provided clear visibility into data quality metrics, building trust in downstream analytics.

Client Perspective

Due to confidentiality, client quotes are not available for this engagement. Nevertheless, the measurable outcomes speak for themselves: a 60x reduction in test cycle time and a 3x reduction in manual effort transformed ETL testing from a delivery bottleneck into a scalable, trusted process.

The Bottom Line

By replacing manual ETL testing with an intelligent, automation-driven framework, this global enterprise achieved dramatic improvements in speed, reliability, and scalability. Moreover, the reusable framework eliminated re-engineering efforts, reduced costs, and established a foundation for trusted, high-quality data that powers confident business decisions.

Ready to Transform Your Data Quality Assurance?

Let’s discuss how our intelligent ETL testing solutions can help you accelerate cycles, reduce costs, and build trust in your data.

Contact Us Today →